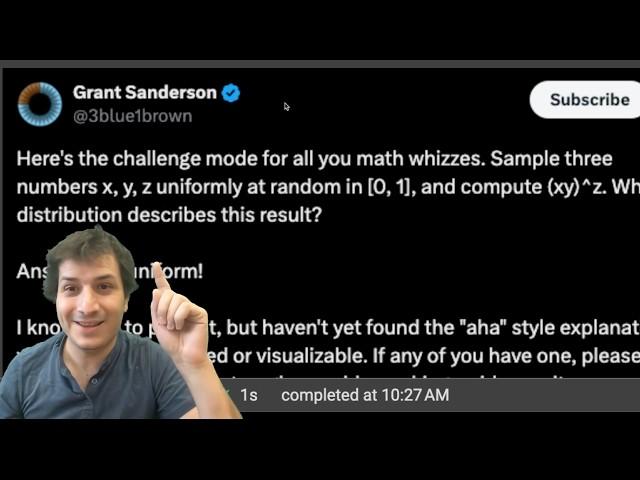

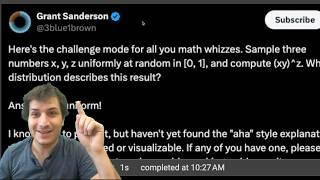

3Blue1Brown's Probability Challenge Solved!

Comments:

Nice

Could I ask what's the name of the background track?

I really like your background music, it's very calming.

How's your dad doing?

Awesome video

Is it true that f(U) is f inverse? Here, we have ln(U) = E, and the related problem from parker, sqrt(U) = U^2

I've been doing Python for years. TIL you can use _s as separators for integers
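
For anyone else who missed this: a minimal sketch of underscore separators in Python numeric literals (PEP 515, Python 3.6 and later). The variable name `n_samples` is just an illustration, not taken from the video's notebook.

```python
# Underscores inside numeric literals are ignored by the parser,
# so they work purely as visual digit separators.
n_samples = 10_000_000
print(n_samples == 10000000)

# The "_" format option inserts the separators when printing.
print(f"{n_samples:_}")

# int() accepts underscores in strings as well.
print(int("1_000"))
```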

Now I'm wondering: under what conditions on a distribution D does it hold that Z = U(X + Y) in distribution, for U ~ Unif(0, 1) and X, Y, Z ~iid D? On the face of it, this sounds like something that might be true for more distributions than just D = Exp and D = Geom.

Great presentation. Loved your energy. Subbed.

The way you explained it, it made me think of the "memorylessness" property of Exp and Geom. And you do allude to it in the sketch of your proof, but no longer need it when you actually go about proving it. Very fascinating fact, and a most elegant proof!

I think your method is more "intuitive" than mine, in the sense you have a combinatorial perspective, but still more complicated.

I still feel that the best explanation is one of plain calculation:

P(XY ≤ r) = r - r log(r) (easy by integral)

Set W = XY,

P(W^Z ≤ r) = int_0^r (1 - log(r)/log(w))(-log(w))dw

Here 1 - log(r)/log(w) is P(Z ≥ log(r)/log(w)), read off as an area in the (w, z) chart; only w ≤ r contributes, which gives the integration limit; and (-log(w)) is the PDF of W.

Of course, calling this "easy" assumes the background of a student who has taken some kind of probability class. Other than that, the integrals are really simple to perform, as long as you know the limit of x log(x) as x -> 0 from the right.
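
For readers who want the integral written out, here is a sketch of the calculation in this comment. It assumes X, Y, Z are independent Uniform(0, 1), W = XY, log is the natural logarithm, and 0 < r < 1.

```latex
\begin{align*}
P(W \le w) &= w - w\log w
  \quad\Longrightarrow\quad f_W(w) = -\log w, \qquad 0 < w < 1, \\
P(w^{Z} \le r) &= P\!\left(Z \ge \frac{\log r}{\log w}\right)
  = \begin{cases}
      1 - \dfrac{\log r}{\log w}, & 0 < w \le r, \\[1ex]
      0, & r < w < 1,
    \end{cases} \\
P(W^{Z} \le r) &= \int_0^r \left(1 - \frac{\log r}{\log w}\right)(-\log w)\,dw
  = \int_0^r (\log r - \log w)\,dw \\
&= r\log r - \bigl[\,w\log w - w\,\bigr]_0^r
  = r\log r - (r\log r - r) = r .
\end{align*}
```

The last line uses w log w -> 0 as w -> 0 from the right, and P(W^Z ≤ r) = r is exactly the Uniform(0, 1) CDF.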

Thank you. It's given me ideas for my own projects: Python and R code backed up with a "blackboard" presentation of the mathematics, in my case group theory.

Nice video. Is the last limit you took a limit in distribution? (I mean, there are a lot of modes of convergence in probability: a.s., in probability, in Lp...)

Oh wow, really cool! I like your video style, very calm.

Looks almost exactly like the comparison sqrt(rand0) vs max(rand1, rand2). EDIT: see: v=ga9Qk38FaHM
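
A quick way to check the sqrt-vs-max comparison numerically: both sqrt(U) and max(U1, U2) have CDF t^2 on [0, 1]. This is only a sketch using the standard library; the seed, sample size, and the helper `ecdf` are arbitrary choices made here.

```python
import random

rng = random.Random(0)  # fixed seed so the run is reproducible
n = 200_000

# Square root of one uniform draw vs. the larger of two uniform draws.
sqrt_samples = [rng.random() ** 0.5 for _ in range(n)]
max_samples = [max(rng.random(), rng.random()) for _ in range(n)]


def ecdf(samples, t):
    """Empirical CDF: fraction of samples that are <= t."""
    return sum(s <= t for s in samples) / len(samples)


# Both empirical CDFs should be close to t**2.
for t in (0.25, 0.5, 0.75):
    print(t, ecdf(sqrt_samples, t), ecdf(max_samples, t), t ** 2)
```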

If I assume x,y and z to be equal to x (given that they are 3 random uniform variables) I get:

(x*x)^x = x^(2x)

And because x is on average 0.5

x^(2x) ≈ x

And because x is uniform then x^(2x) is uniform.

Is there anything wrong with this reasoning?

I love your proof so much as it connects different distributions in a very intuitive way. Thank you for your presentation. I can sleep well now.

Adding two exponentials will double the outcome on average. Then multiplying this by a random uniform scalar between 0 and 1 will, on average, halve the result.

I found the step of comparing the distributions the most unintuitive step - I don't find it obvious until I crunch the numbers.

I think a 3b1b-style animation of creating "U(G1+G2)", representing the probability distribution as points on a number line with regions of equal probability between them, and then comparing it visually to the original G, would really help.

Great video! Nice to see a proof without invoking a bunch of integrals

Just asked OpenAI's o1-preview model this and it solved it in 10 seconds :-/

Can GPT o1 answer this question?

This is pretty cool! A stupid question, I'm sorry: the Python code at the start, was that run as a Jupyter notebook inside of VS Code?

I've spent a lot of time on this problem now to get the best solution.

Here is my best one so far:

If we have Z = U(E1 + E2), where U is uniform and E1 and E2 are exponentials, then E1 + E2 is Erlang with density x e^(-x), and the density of Z conditioned on E1 + E2 = x is 1/x as long as z is in the region [0, x). So when you compute the marginal density of Z, you get a very easy expression where x cancels out with 1/x.
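
Written out, the marginalization in this paragraph looks roughly like the following. This is a sketch assuming rate-1 exponentials, U ~ Uniform(0, 1) independent of E1 and E2, and the shorthand S = E1 + E2 (a label introduced here only for brevity).

```latex
\begin{align*}
f_S(x) &= x e^{-x}, \qquad x > 0, \\
f_{Z \mid S}(z \mid x) &= \frac{1}{x}, \qquad 0 \le z < x, \\
f_Z(z) &= \int_z^{\infty} f_{Z \mid S}(z \mid x)\, f_S(x)\,dx
        = \int_z^{\infty} \frac{1}{x}\, x e^{-x}\,dx
        = \int_z^{\infty} e^{-x}\,dx
        = e^{-z}, \qquad z \ge 0 .
\end{align*}
```

So Z has the rate-1 exponential density again, which is the claim.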

Now what I want to do is to make use of the fact that U(E1 + E2) is identically distributed as (1-U)(E1 + E2) as well as the fact that E1 + E2 = U(E1 + E2) + (1 - U)(E1 + E2). Now if we were to show that U(E1 + E2) is independent of (1-U)(E1 + E2) then we'd basically be done by appealing to the characteristic functions. But my conjecture is that the fact that U(E1 + E2) is identically distributed as (1-U)(E1 + E2) (as well as the fact that the sum is equal to the sum of iid E1 and E2) by itself implies independence. If so, then this would be a very nice proof.

this does mean that out of the cube that the values x,y,z∈[0,1] generate in 3d space, the surface (xy)ᶻ=t has the same surface area for all t∈[0,1]

I think considering the moments is the easiest way to do this. It takes about 30 seconds to show that E[(xy)^(zt)] = 1/(1+t), which are the moments of a uniform distribution.
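
The 30-second computation can be sketched as follows, assuming X, Y, Z are independent Uniform(0, 1) and t ≥ 0; the steps condition on Z first and then integrate over z.

```latex
\begin{align*}
E\!\left[X^{s}\right] &= \int_0^1 x^{s}\,dx = \frac{1}{1+s}, \qquad s \ge 0, \\
E\!\left[\bigl((XY)^{Z}\bigr)^{t} \,\middle|\, Z = z\right]
  &= E\!\left[X^{zt}\right] E\!\left[Y^{zt}\right] = \frac{1}{(1+zt)^{2}}, \\
E\!\left[\bigl((XY)^{Z}\bigr)^{t}\right]
  &= \int_0^1 \frac{dz}{(1+zt)^{2}}
   = \left[-\frac{1}{t(1+zt)}\right]_{z=0}^{z=1}
   = \frac{1}{t}\left(1 - \frac{1}{1+t}\right)
   = \frac{1}{1+t} .
\end{align*}
```

Since 1/(1+t) is also E[U^t] for U ~ Uniform(0, 1), and a distribution supported on [0, 1] is determined by its moments, the two distributions agree.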

@3blue1brown this is really cool i hope you see it

I think it’s perhaps simpler to ask about the conditional distribution of E1|E1+E2. Clearly it’s uniform on 0 to E1+E2, since it’s generated by a Poisson point process. So the distribution of E1 must be the same as the distribution of E1+E2 times a (0, 1) uniform RV, by virtue of the definition of conditional distributions and uniform RV scaling, which is what we wanted to show.
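
A small simulation can sanity-check both halves of this argument. This is a sketch assuming rate-1 exponentials; the seed, sample size, and the names `ratios`, `scaled`, and `ecdf` are illustrative choices, not from the video.

```python
import math
import random

rng = random.Random(1)  # fixed seed so the run is reproducible
n = 200_000

ratios = []  # E1 / (E1 + E2): should look Uniform(0, 1)
scaled = []  # U * (E1 + E2): should look Exp(1)
for _ in range(n):
    e1 = rng.expovariate(1.0)
    e2 = rng.expovariate(1.0)
    ratios.append(e1 / (e1 + e2))
    scaled.append(rng.random() * (e1 + e2))


def ecdf(samples, t):
    """Empirical CDF: fraction of samples that are <= t."""
    return sum(s <= t for s in samples) / len(samples)


# Uniform(0, 1) has CDF t.
for t in (0.25, 0.5, 0.75):
    print("ratio ", t, ecdf(ratios, t), t)

# Exp(1) has CDF 1 - exp(-t).
for t in (0.5, 1.0, 2.0):
    print("scaled", t, ecdf(scaled, t), 1 - math.exp(-t))
```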

This popped in my YT feed as I was taking a break from some mind numbing and exhausting grinding trying to derive an exotic probability distribution in biophysical chemistry; progress is slow.

Thumbnail definitely stopped my scrolling, almost on reflex upon reading “distribution” (I’m married to my work… and it’s an abusive relationship 😢). As I was reading the prompt on the thumbnail, I was going “oh this is eas…. ONE OF THE RVS IS IN THE EXPONENT? 🤢🤢🤢”

Had to click on the video; I guess my break from probability is… more probability? Does this make me a gambling addict? …

At any rate, then you say “it’s Uniform!” And I see it on the screen… and I’m like “PROVE IT MOTHERFKKEHAVAU-888”

Having my expectations raised and getting all demanding and wanting a proof, you proceed to open up a colab notebook, import some Python and go “did 10 million samples”….

I don’t think I’ve been this satisfied with the “rest of the world” in a while; I came looking for silver and I found gold! Now I’m gonna have a smoke, finish my break, and sample myself some 10million reasons to go to bed with victory !

… oh shit, you actually go on and do a proof! 😮

Not gonna lie; not as tasty as sealing the deal with a couple of Jupyter cells in 2mins 😂 I guess I’m a subscriber now

In case somebody wants to try it in R:

    n <- 10000
    x <- runif(n)
    y <- runif(n)
    z <- runif(n)
    hist((x*y)^z)

You can Google "R online" to just copy, paste, and run it.
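
For those who prefer Python, here is a rough standard-library equivalent of the R snippet. It is a sketch, not the notebook from the video: it prints the fraction of samples per bin instead of drawing a histogram, and the seed, sample size, and bin count are arbitrary.

```python
import random

rng = random.Random(42)  # fixed seed so the run is reproducible
n = 200_000

# (x * y) ** z with x, y, z independent Uniform(0, 1) draws.
samples = [(rng.random() * rng.random()) ** rng.random() for _ in range(n)]

# Count samples in 10 equal-width bins on [0, 1];
# a value of exactly 1.0 is placed in the last bin.
bins = 10
counts = [0] * bins
for s in samples:
    counts[min(int(s * bins), bins - 1)] += 1

# For a uniform distribution, each fraction should be near 1 / bins.
for i, c in enumerate(counts):
    print(f"[{i / bins:.1f}, {(i + 1) / bins:.1f}): {c / n:.4f}")
```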

Following this reasoning, shouldn't (WXY)^Z be uniform as well? And that is not the case...

awesome explanations :D

nICe

it's not exactly the same, you are going to wind up with issues at 0^0 which could come up.
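
On the 0^0 worry, here is a small sketch of how Python floats behave in that corner, assuming the simulation uses ordinary float arithmetic. `random.random()` can in principle return exactly 0.0, but an exact zero is so unlikely that it has no visible effect on a histogram.

```python
import random

# Python floats follow the usual pow() convention: 0.0 ** 0.0 is 1.0,
# so the simulation does not raise an error even in this corner case.
print(0.0 ** 0.0)
print(0.0 ** 0.5)  # zero base with a positive exponent stays 0.0
print(0.5 ** 0.0)  # any positive base with exponent 0.0 gives 1.0

# Count how often an exact 0.0 shows up in a batch of uniform draws.
rng = random.Random(0)
hits = sum(rng.random() == 0.0 for _ in range(100_000))
print(hits)
```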

so fun!

Cool. Took probability last year at uni and - even though I didn't fully follow - it was a nice refresher. Thanks :)

Great video!

Uhm. When Z=0 the answer is 1? Doesn't this throw off the distribution?

Wonderfully done, thank you!

Thought it was Alfie

Time to roll the dice till I get 0^0

Are you from Romania?

He. He wrote. Grant is a man.

Gotta ask, what tool are you using to draw those notes? iPad? Or a Mac app?

I love this form of teaching !!!

Haven’t seen the whole video yet, but since 1^z and 0^z have the same uniform distribution and 0 and 1 covers the whole domain, it is intuitive that a^z has the same distribution as just z (which is uniform), isn’t it?

Very nice, I love it!
